Challenge

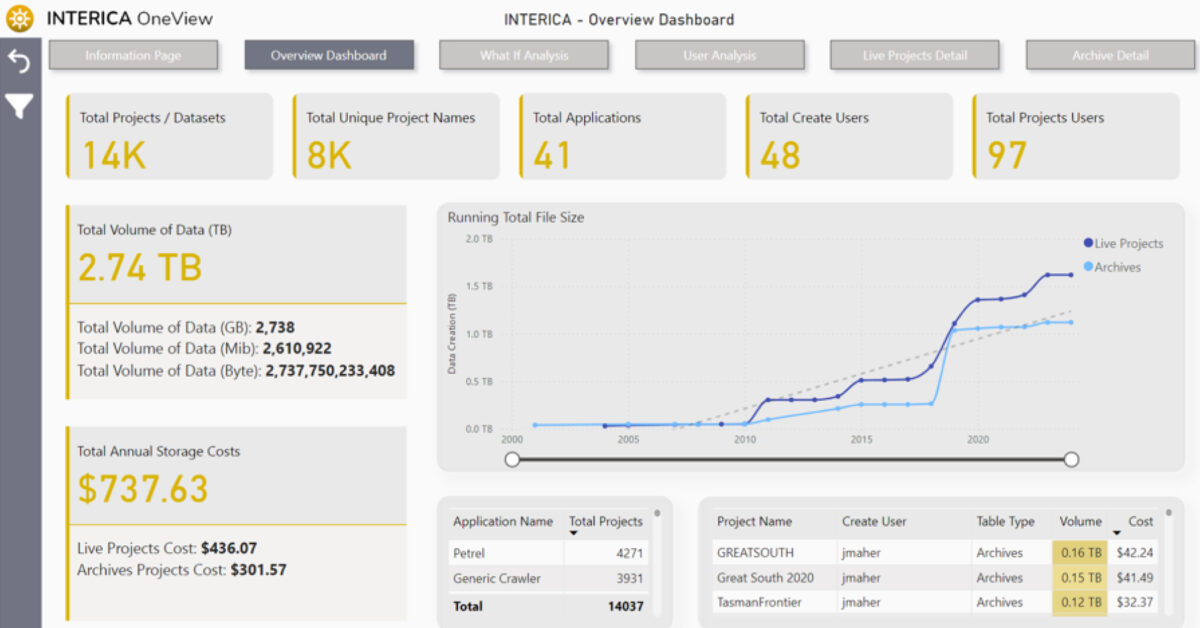

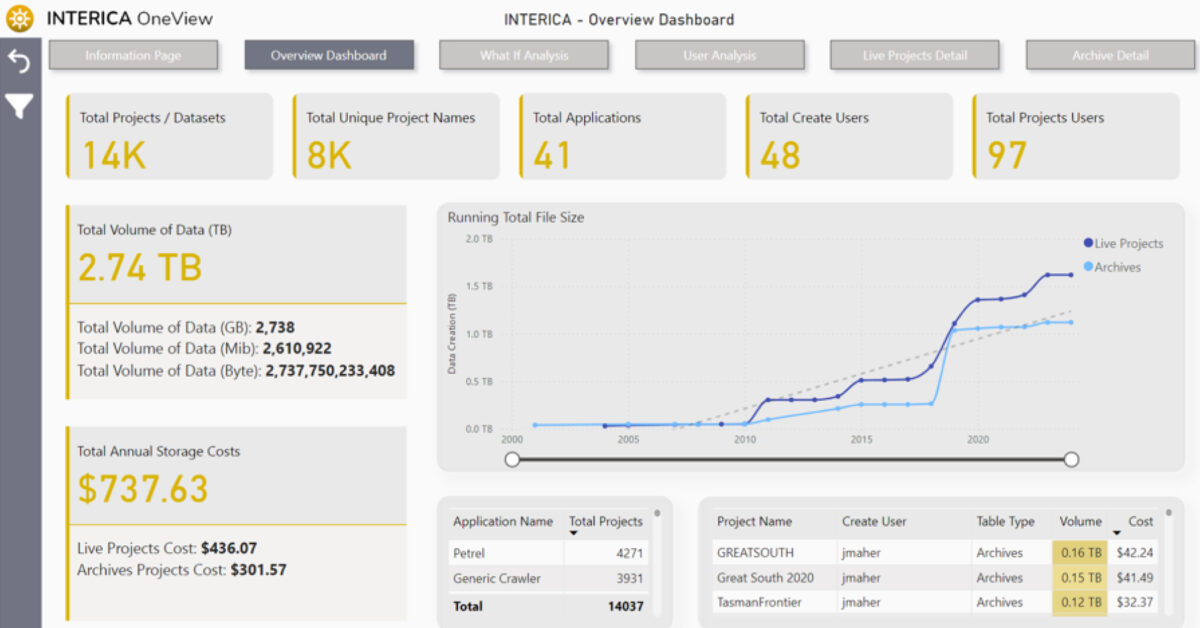

A mid-size energy company faced an escalating storage crisis. Over time, its subsurface and project data had grown to nearly 5 petabytes, spread across Windows and Linux environments and multiple applications. Annual storage management costs exceeded $7.5 million, and the sheer volume of data was blocking its cloud migration plans. Duplicate datasets, inactive project files, and unstructured archives inflated infrastructure costs and slowed performance.

Solution

The company deployed Interica OneView™ (IOV) to gain full visibility and control of its data estate. IOV rapidly scanned and indexed data across both operating systems, identifying redundant and inactive files across seismic and application domains. Using policy-based archiving, the team safely migrated 3 petabytes of data to low-cost AWS cold storage, while retaining full traceability and metadata visibility through Power BI dashboards. Asset teams could still search, locate, and request archived data through IOV’s intuitive interface, maintaining accessibility without high active storage costs.
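The case study does not describe how IOV identifies redundant and inactive files internally. As a hedged illustration of the general technique only, the Python sketch below groups byte-identical files by SHA-256 content hash, hashing only files that share a size, and flags files whose modification time is older than a threshold. The names `scan` and `file_digest` and the 365-day default are hypothetical choices for this example, not IOV's API or archiving policy.

```python
import hashlib
import os
import time
from collections import defaultdict
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks to bound memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def scan(root: str, inactive_days: float = 365.0):
    """Walk `root`, grouping byte-identical files and flagging stale ones.

    Returns (duplicates, inactive): duplicates maps digest -> list of paths
    (only groups with two or more members); inactive lists files whose
    modification time is older than `inactive_days` (hypothetical policy).
    """
    by_size = defaultdict(list)
    inactive = []
    cutoff = time.time() - inactive_days * 86400
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            p = Path(dirpath) / name
            st = p.stat()
            by_size[st.st_size].append(p)
            if st.st_mtime < cutoff:
                inactive.append(p)

    # Only files of equal size can be identical, so hash just those.
    by_digest = defaultdict(list)
    for paths in by_size.values():
        if len(paths) < 2:
            continue
        for p in paths:
            by_digest[file_digest(p)].append(p)

    duplicates = {d: ps for d, ps in by_digest.items() if len(ps) > 1}
    return duplicates, inactive
```

Comparing sizes first avoids hashing most unique files. A production scanner would also need to handle unreadable files and symbolic links.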

Result

Within the first year, the organisation achieved dramatic improvements in both cost and efficiency:

- Storage footprint reduced by 60% through duplicate detection and archiving.

- Annual savings of over $4 million, including avoided costs from future growth.

- Successful cloud migration achieved with minimal disruption.

- Improved data accessibility via Power BI integration and structured metadata.

IOV's automated discovery and archiving workflows helped transform the company's sprawling data environment into a manageable, cost-efficient system, showing that visibility and governance directly drive financial performance.

Challenge:

A land acquisition contractor needed to deliver seismic field QC and initial processing under tight time and budget constraints. They faced frequent equipment changes, variable data quality, and limited processing resources at remote locations. Traditional tools couldn’t cope with the need for fast, flexible QC in the field while maintaining full traceability of results.

Solution:

Using Claritas, the contractor was able to deploy a portable, stable field QC and processing system that mirrored the workflows used in their main processing centre. The modular design of Claritas let them customise workflows to suit each acquisition environment and automate repetitive steps. Our technical team also provided targeted training, empowering field staff to confidently run QC and troubleshoot data quality issues on site.

Result:

The contractor built a reliable, self-sufficient QC workflow that reduced re-shoots and downtime, improved communication between the field and the office, and shortened project turnaround times—all while ensuring consistent seismic data quality across campaigns.

Challenge:

A European university research group developing new techniques for minerals exploration and carbon sequestration needed a seismic processing platform that could easily integrate with their proprietary algorithms and experimental workflows. Commercial software options often lacked the openness or flexibility needed for academic R&D, and licensing costs limited student access.

Solution:

Claritas provided the perfect balance of open architecture and academic accessibility. The research team used the open I/O and scripting capabilities of Claritas to interface with their own real-time QC systems, validating data from field tests of new sensors and imaging techniques. Through the Claritas Academic Site License, every MSc and PhD student in the program gained access to the same professional-grade processing environment used in industry.

Result:

Claritas helped the university accelerate its research pipeline—allowing rapid prototyping of new algorithms—and gave students hands-on experience with real seismic workflows. This strengthened collaboration between academia and industry and positioned the program as a hub for carbon storage innovation.

Challenge:

A marine geophysical survey contractor was expanding from single-channel to multi-channel seismic acquisition to meet growing demand for offshore wind and near-surface studies. They needed a processing system that was robust at sea, easy to deploy on vessels with limited connectivity, and flexible enough to run the same workflows in the office.

Solution:

By adopting Claritas, the company implemented a shared license model that let teams seamlessly switch software access between the office and offshore vessels—maximising utilisation while minimising cost. Claritas’s UHR (Ultra-High-Resolution) seismic modules provided the precise imaging tools needed for shallow marine interpretation. In addition, customised Claritas training ensured field and office teams quickly gained proficiency in multi-channel seismic processing.

Result:

The contractor now delivers higher-quality data faster, using consistent workflows from vessel to final report. The ability to process UHR seismic data in-house has opened new revenue opportunities and improved control over project schedules and deliverables.

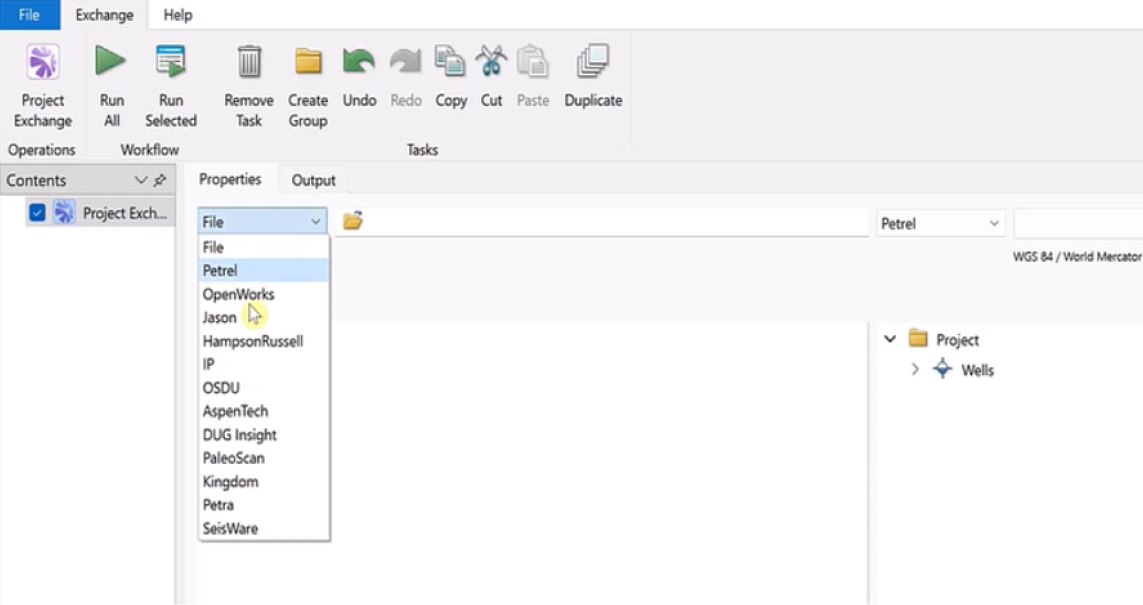

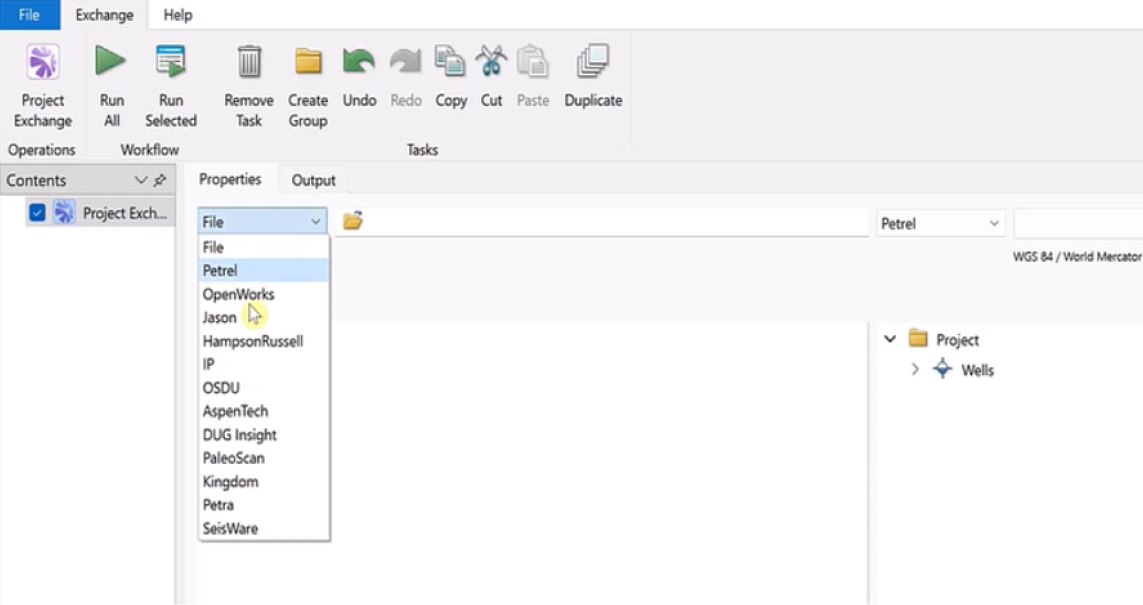

A global energy company wanted to align its subsurface data workflows with the OSDU™ Data Platform as part of a wider cloud and digital transformation strategy.

While the corporate data team had implemented an OSDU environment, day-to-day operations still relied heavily on desktop applications like Petrel™, OpenWorks, and ArcGIS. The company needed a reliable, secure way to connect these systems so that users could easily access and update data across both traditional and cloud-based platforms.

Challenge

- Legacy interpretation tools were isolated from the new OSDU data platform

- Manual data exchange led to duplication, version mismatch, and delays

- Custom integration scripts were difficult to maintain and resource-intensive

- Subsurface teams needed to access trusted OSDU data directly within their existing workflows

Solution

Exchange was deployed as a configurable integration layer connecting the OSDU environment with the company’s core subsurface applications.

Using Exchange, the company could:

- Read and write well, seismic, and spatial data between OSDU, Petrel™, and OpenWorks

- Filter and organize data transfers by region, project, or dataset type

- Automate workflows to keep information synchronized between on-premises and cloud systems

- Empower users to manage connections without requiring custom code or complex IT intervention

Result

- Seamless interoperability between OSDU and existing subsurface tools

- More consistent, reliable access to shared datasets across disciplines

- Reduced dependency on manual imports and ad-hoc scripts

- A scalable integration framework supporting future digital initiatives

Key Takeaway

Exchange makes digital transformation practical by connecting the subsurface tools geoscientists already use with emerging data platforms like OSDU.

The result is a unified, governed environment where data moves freely, and teams can focus on insight, not integration.

GeoSoftware, a leading provider of advanced seismic reservoir characterisation technology, saw increasing demand from its customers for OSDU-aligned workflows. While many operators were moving toward cloud-based data ecosystems, day-to-day seismic interpretation and inversion work still depended on desktop environments like HampsonRussell and Jason.

To meet customer expectations and to avoid diverting engineering resources from core product development, GeoSoftware needed a reliable, proven way to bring OSDU interoperability directly into its applications.

Challenge

- Customers wanted seamless OSDU integration without changing how they worked

- HampsonRussell and Jason needed access to trusted OSDU well data within existing workflows

- Building and maintaining custom connectors internally would be costly and slow

- GeoSoftware required a solution that aligned with OSDU standards while preserving product stability

Solution

GeoSoftware embedded Exchange as a native integration layer within both HampsonRussell and Jason.

Using Exchange, GeoSoftware was able to:

- Connect directly to the OSDU Data Platform for secure read/write access to well data

- Ensure interoperability between OSDU datasets and existing seismic interpretation workflows

- Deliver integration capabilities without re-architecting core product components

- Offer customers OSDU-ready functionality with minimal disruption

Result

- Seamless OSDU-aligned workflows inside HampsonRussell and Jason

- Faster access to trusted well data and improved workflow consistency

- A significant reduction in internal development effort

- More engineering capacity for advancing high-value seismic and reservoir analysis features

Key Takeaway

By embedding Exchange, GeoSoftware delivered OSDU-compliant capabilities quickly and confidently, without sacrificing focus on its core technology.

Exchange provided the integration layer, while HampsonRussell and Jason continued to deliver the science.

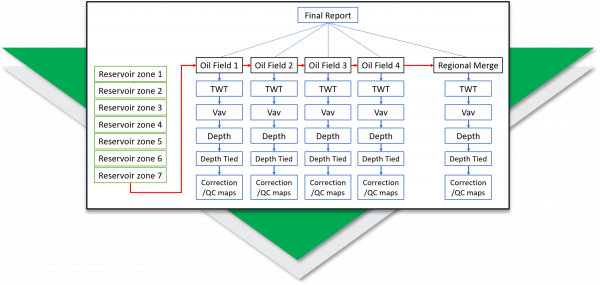

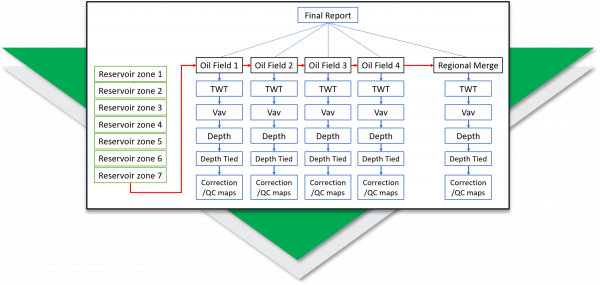

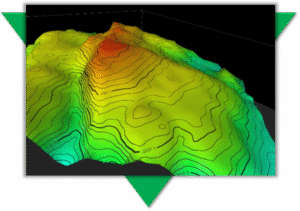

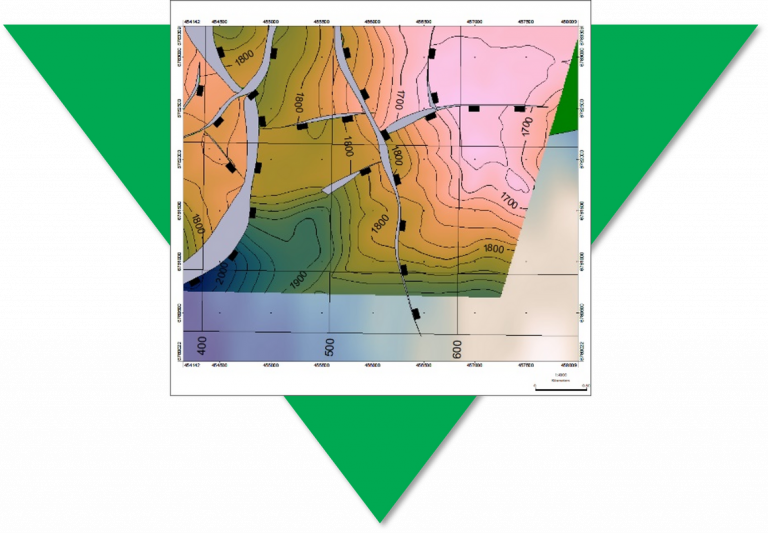

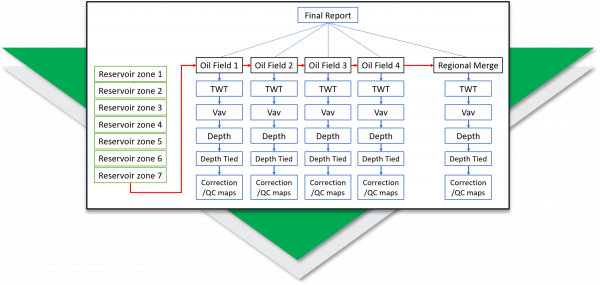

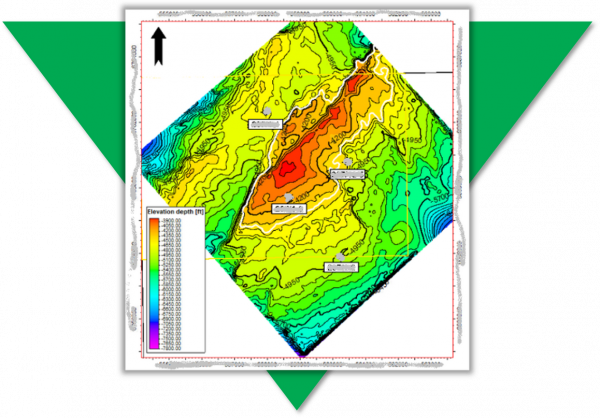

Regional Mapping of Key Reservoir Horizons

A regional exploration team at a large NOC needed to map key reservoir horizons across four producing oil fields. Although the fields are geographically adjacent, they had been developed separately for more than 30 years, with no exchange of knowledge between the separate Asset Teams.

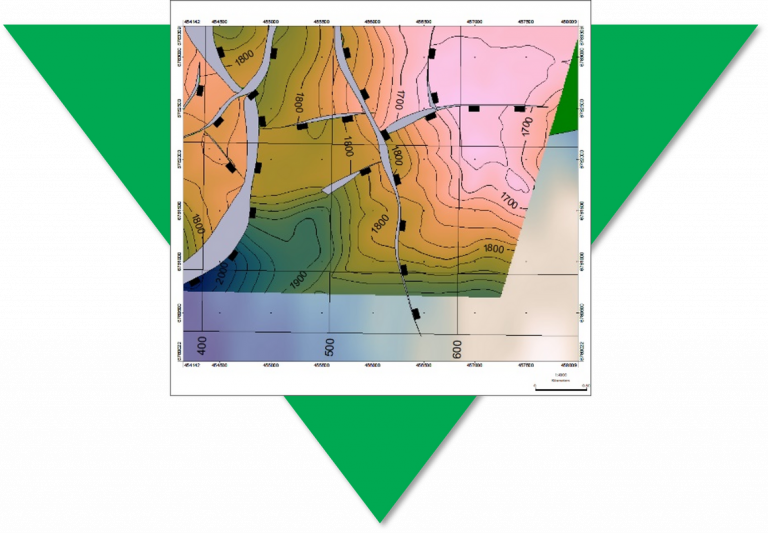

A small team of Petrosys Group consultants was deployed on rotation to install the PRO software, establish data exchange links to third-party applications, and develop the workflows used to build the regional maps for seven key horizons.

Drawing on the final TWT (two-way time) interpretation and velocity data from each of the four Assets, the team built intuitive, interactive workflows to generate well-tied depth horizons for each Asset, as well as regionally merged, well-tied depth horizons.

In addition to creating the regional maps for the seven merged, well-tied depth horizons, the project delivered:

- a documented workflow ensuring repeatability as new interpretations become available

- optional delivery of the output depth grids back into the preferred interpretation solution

- knowledge transfer in both domain expertise and future workflow configuration

The value the Petrosys Group consultants brought to this project:

- domain expertise delivering results quickly by addressing the identified technical challenges

- a complete catalogue of maps for the seven key events, with the capability to reproduce and update them at any time

- staff could stay focused on their own areas of expertise while learning new best practices to apply when the workflow is needed again

The challenge:

Consistently map key reservoir horizons across multiple oil fields operated by different teams.

The Technical Objectives:

- Install and configure an advanced mapping tool (PRO) to connect directly to data across 4 asset teams

- Connect to seismic and well interpretation where available (TWT, Vav, Depth) for 7 reservoir zones across the 4 asset teams

- Create TWT and Vav maps, depth-convert surfaces, tie them to well data, and analyse correction/QC maps

- Adjust models as necessary and re-run

- Merge all maps into a regional model
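The depth-conversion step in these objectives rests on a standard relation: with average velocity V_av measured from datum, depth is z = V_av × TWT / 2, because two-way time covers the path down and back. A common way to tie the result to wells is to compute the residual (well depth minus map depth) at each well and spread those residuals across the grid as a correction surface. The Python sketch below assumes TWT in milliseconds, velocity in m/s, grids stored as `{(x, y): value}` dictionaries, and wells located on grid nodes. It uses inverse-distance weighting for the correction purely as an illustrative choice; the function names are hypothetical and this is not PRO's interface or the method used on this project.

```python
from math import hypot


def depth_from_twt(twt_ms: float, vav_mps: float) -> float:
    """Depth (m) below datum from two-way time (ms) and average velocity (m/s).

    TWT covers the down-and-back path, so one-way time is TWT / 2.
    """
    return vav_mps * (twt_ms / 1000.0) / 2.0


def idw(x, y, points, power=2.0):
    """Inverse-distance-weighted estimate at (x, y) from [(px, py, value), ...]."""
    num = den = 0.0
    for px, py, v in points:
        d = hypot(x - px, y - py)
        if d == 0.0:
            return v  # exactly on a control point: honour it
        w = 1.0 / d ** power
        num += w * v
        den += w
    return num / den


def well_tie(depth_grid, wells, power=2.0):
    """Tie a depth grid to well tops (hypothetical helper).

    depth_grid: {(x, y): depth_m}; wells: [(x, y, well_depth_m), ...] with
    each well on a grid node. Residual = well depth - map depth at the well;
    residuals are interpolated with IDW and added back to every node.
    """
    residuals = [(x, y, zw - depth_grid[(x, y)]) for x, y, zw in wells]
    return {
        (x, y): z + idw(x, y, residuals, power)
        for (x, y), z in depth_grid.items()
    }
```

Because IDW honours its control points exactly, the tied grid matches each well top, while nodes between wells receive a blend of the nearby residuals.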

The Deliverables:

- 175 maps

- Report and presentation documenting the process and the results

- Re-usable workflow which can be re-run as interpretation is updated

- Training on the software and the workflow

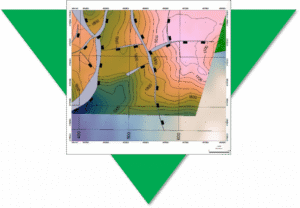

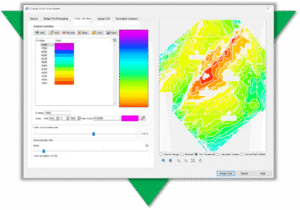

Quickly Convert Legacy Data to Digital Formats

A new entrant to a mature basin needed to gather surface and fault data from many images and scanned maps. Traditional digitising was slow, tedious, and unpopular with the team, but attempts to outsource it had resulted in error-prone data being returned. Useful knowledge was being excluded because it was too difficult to capture. It was clear they needed a way to quickly convert their legacy data.

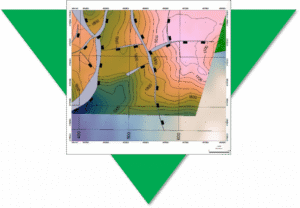

PRO has tools to georeference images, convert rasters to grids, automatically trace lines, and edit grid and vector data. Geoscientists can convert images quickly without the risk of repetitive strain, and keep ownership of their data, making any necessary edits to produce high-quality results that match their expectations and knowledge.
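Georeferencing a scanned map means finding a transform from pixel coordinates (column, row) to map coordinates (x, y). As a minimal, hedged sketch, the Python below fits an exact affine transform from three non-collinear ground control points using Cramer's rule. The function names and the control-point values in the usage note are hypothetical; this is not how PRO implements georeferencing, and real tools commonly fit more control points by least squares and handle map projections.

```python
def _det3(m):
    """Determinant of a 3x3 matrix given as a list of three rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def _solve3(m, rhs):
    """Solve m @ v = rhs for a 3x3 system using Cramer's rule."""
    det = _det3(m)
    if abs(det) < 1e-12:
        raise ValueError("control points are collinear; cannot fit affine")
    solution = []
    for k in range(3):
        # Replace column k with the right-hand side.
        mk = [row[:k] + [rhs[i]] + row[k + 1:] for i, row in enumerate(m)]
        solution.append(_det3(mk) / det)
    return solution


def fit_affine(gcps):
    """Fit x = a*col + b*row + c and y = d*col + e*row + f from three GCPs.

    gcps: three ((col, row), (x, y)) pairs. Returns a pixel -> map function.
    """
    m = [[float(col), float(row), 1.0] for (col, row), _ in gcps]
    a, b, c = _solve3(m, [xy[0] for _, xy in gcps])
    d, e, f = _solve3(m, [xy[1] for _, xy in gcps])

    def to_map(col, row):
        return a * col + b * row + c, d * col + e * row + f

    return to_map
```

For example, for a north-up scan at 25 m per pixel, `fit_affine([((0, 0), (500000, 6000000)), ((100, 0), (502500, 6000000)), ((0, 100), (500000, 5997500))])` returns a function that maps pixel (40, 60) to (501000, 5998500), up to floating-point rounding.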

The Challenge

Traditional digitising is time-consuming and laborious: it took over 3 hours.

The Solution

Using PRO, the geoscience data was converted and QC'd in 25 minutes.

The Result

PRO writes data directly back to interpretation systems for further modeling

Fast. Accurate. Relevant.

With a fast and accurate process, much more relevant data is available for assessment. The outputs are properly positioned in all dimensions and are easily shared with geoscience and GIS software, adding context and value to recent interpretation.

“We used to make do with using images as background pictures, but being able to properly incorporate surfaces or contours with z-values has changed the way we work.” TF, Geoscience Data Coordinator

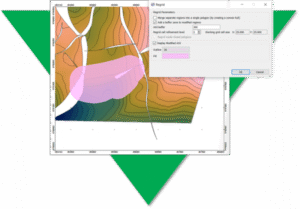

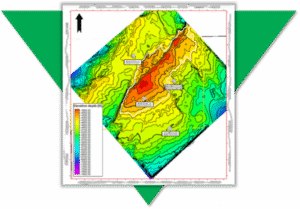

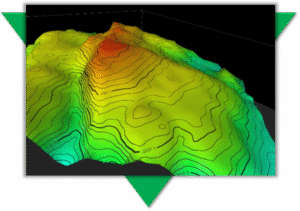

Improve the Geological Accuracy of your Subsurface Maps

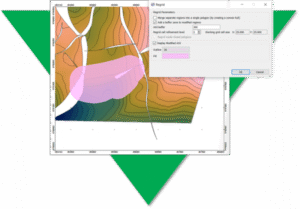

A new venture exploration team needed to map prospects in a highly faulted area. They knew faults were present in their area of interest, but some were not being picked up by the auto-tracking software, so it became clear they would need to improve the geological accuracy of their subsurface maps. The team ran PRO to generate a subsurface grid from a 3D seismic surface and fault sticks, then used its advanced grid editing tools to reinterpret a fault zone based on their specialist understanding of the area.

Many gridding operations provide a mathematical solution to a geological problem. We have ways of modeling trends and bias using geostatistical methods, yet the quality of a subsurface map is only as good as the input data.

Poor or incomplete datasets can produce unrealistic surfaces, which in turn can lead to poor economic decisions.

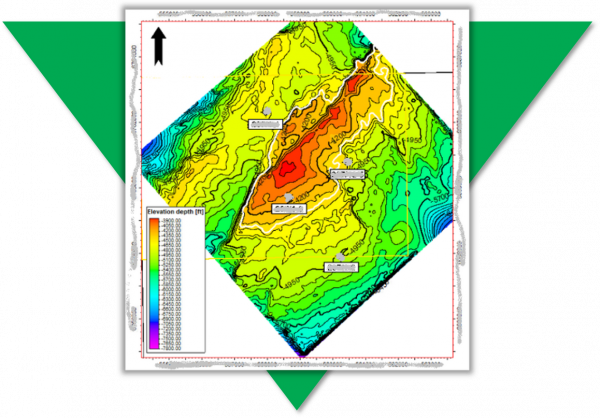

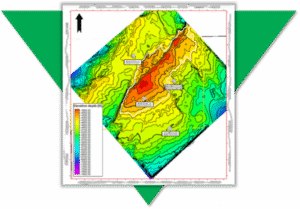

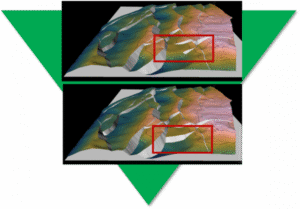

The Challenge

Due to missing input data, the resulting fault polygon terminates at its heave maximum. The adjacent contours show that this is unrealistic: the isolated fault should be extended to merge with the major N-S trending fault as a splay.

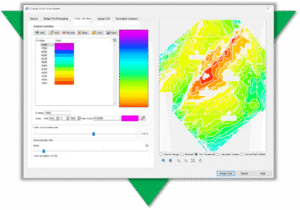

The Solution

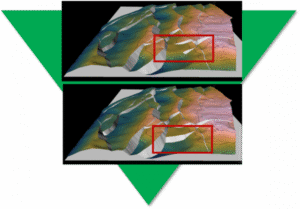

In PRO, users have access to advanced, easy-to-use grid editing tools that let them edit the faults and contours embedded within a 3D surface. Any edits are then used to control a localised re-grid of the 3D structure and fault network.

The Result

3D grid of the top reservoir showing the fault network before (top) and after (bottom) the geoscientist reinterpreted it.

Geological Accuracy

The grid editing process allows the geoscientist to correct geological inaccuracies and manipulate a surface where input data is missing. The tools provide a fast, effective way of generating a structurally sound model, which has implications for hydrocarbon storage and compartmentalisation.

“I’ve just come out of an internal peer review and the reinterpretation of our maps have led to identification of two more prospects.”

Geoscientist, Oil Company